The rapid rise of artificial intelligence has transformed how people search for information, create content, and run businesses. Yet as generative AI tools become more powerful, a pressing question has moved to the center of public debate: Is AI stealing content from websites? Publishers, artists, journalists, and tech companies are now grappling with complex legal and ethical challenges surrounding how AI systems are trained and how they generate content.

TL;DR: AI systems are trained on massive amounts of online content, sparking debate over whether this process amounts to theft or fair use. Website owners argue their copyrighted material is used without permission or compensation, while AI developers claim the training process is transformative and legally compliant. Courts are only beginning to address these disputes, leaving significant legal uncertainty. The outcome could redefine copyright law in the digital age.

- How AI Systems Use Online Content

- Is It Theft or Fair Use?

- Ongoing Lawsuits and Legal Precedents

- Ethical Concerns Beyond the Law

- Impact on Publishers and Website Owners

- Global Regulatory Approaches

- Industry Perspectives

- Potential Solutions and Compromises

- The Future of Copyright in the Age of AI

- FAQ

How AI Systems Use Online Content

Modern generative AI models are trained on enormous datasets that may include books, articles, forums, and other publicly accessible web pages. During training, algorithms analyze patterns in language, images, or code to learn how to generate new, coherent outputs.

Importantly, developers argue that AI models do not store exact copies of websites. Instead, they process data statistically, identifying patterns and relationships between words or pixels. However, critics contend that even if content is not stored verbatim, the use of copyrighted works without consent raises legal and ethical concerns.

From a technical standpoint, training involves:

- Data collection: Gathering publicly available text, images, or code.

- Preprocessing: Cleaning and organizing data into machine-readable formats.

- Model training: Running algorithms that detect patterns across billions of data points.

- Fine-tuning: Adjusting outputs to align with desired behavior and safety standards.
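
To make these stages concrete, the following Python sketch walks a single HTML page through a toy version of preprocessing and training. It is a deliberate simplification: "data collection" is simulated with an inline string, fine-tuning is omitted, and the "model" is only a table of word-pair (bigram) counts, whereas modern generative models learn billions of neural-network parameters. The function names `preprocess` and `train_bigrams` are hypothetical and do not describe any real developer's pipeline; the point is only to show what turning text into a statistical representation can look like.

```python
import re
from collections import Counter
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collects visible text from an HTML page, skipping <script> and <style>."""

    def __init__(self):
        super().__init__()
        self.parts = []
        self._skip = 0  # depth inside <script>/<style> elements

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip:
            self._skip -= 1

    def handle_data(self, data):
        if not self._skip:
            self.parts.append(data)


def preprocess(html: str) -> list[str]:
    """Preprocessing (hypothetical helper): strip markup, lowercase, split into words."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.parts).lower()
    return re.findall(r"[a-z']+", text)


def train_bigrams(tokens: list[str]) -> Counter:
    """Toy 'training' (hypothetical helper): count which word follows which."""
    return Counter(zip(tokens, tokens[1:]))


# Simulated "data collection": one page held in memory instead of fetched.
page = "<html><body><p>The cat sat. The cat ran.</p><script>x=1</script></body></html>"
tokens = preprocess(page)
model = train_bigrams(tokens)
print(tokens)
print(model.most_common(1))
```

The resulting counts summarize patterns in the page rather than storing it as a document, which is the kind of "statistical representation" developers point to, though even this toy shows how closely such statistics can track the original wording.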

The controversy arises primarily during the data collection phase, where copyrighted website content may be included.

Is It Theft or Fair Use?

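The legal heart of the issue lies in copyright law. Copyright protects original works of authorship, granting creators exclusive rights to reproduce and distribute their material and to create derivative works from it. In the United States, however, the doctrine of fair use permits some unlicensed uses, such as commentary, criticism, research, and parody. Courts weigh four factors: the purpose and character of the use (including whether it is transformative or commercial), the nature of the copyrighted work, the amount taken, and the effect of the use on the market for the original.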

AI companies often argue that training models constitutes fair use because:

- The process is transformative, turning source material into statistical representations.

- The output does not typically reproduce entire copyrighted works.

- The training process benefits the public by enabling innovation.

On the other hand, publishers and content creators counter that:

- Their work is used without permission or compensation.

- AI-generated outputs may compete directly with original content.

- Some outputs closely resemble or summarize proprietary material.

Whether courts ultimately view AI training as fair use remains an open and evolving question.

Ongoing Lawsuits and Legal Precedents

Several high-profile lawsuits have been filed against AI developers, alleging copyright infringement. Plaintiffs include news organizations, authors, visual artists, and stock image providers. These cases focus on two key issues:

- Whether scraping publicly available website data for training violates copyright law.

- Whether AI-generated outputs constitute derivative works.

Legal experts suggest that outcomes may hinge on:

- The degree of transformation applied during training.

- Whether AI outputs substitute for the original work.

- The commercial nature of AI systems.

Historically, U.S. courts have sided with technology companies in several transformative use cases, such as search engines displaying thumbnail images and the Google Books project, which scanned millions of books to make them searchable. However, generative AI introduces a more complex dynamic because it can create content that resembles human-created material in structure, tone, and specificity.

Ethical Concerns Beyond the Law

Even if certain uses of website content are deemed legal, ethical concerns persist. Many creators feel exploited when their work contributes to AI systems that may undermine their income streams.

Major ethical questions include:

- Consent: Should creators have the ability to opt in or opt out of AI training datasets?

- Compensation: Should websites and artists receive royalties?

- Attribution: Should AI systems disclose which sources influenced their outputs?

Some technology companies have begun negotiating licensing agreements with publishers and stock content platforms. These agreements may offer a path forward, allowing AI innovation while compensating content owners.

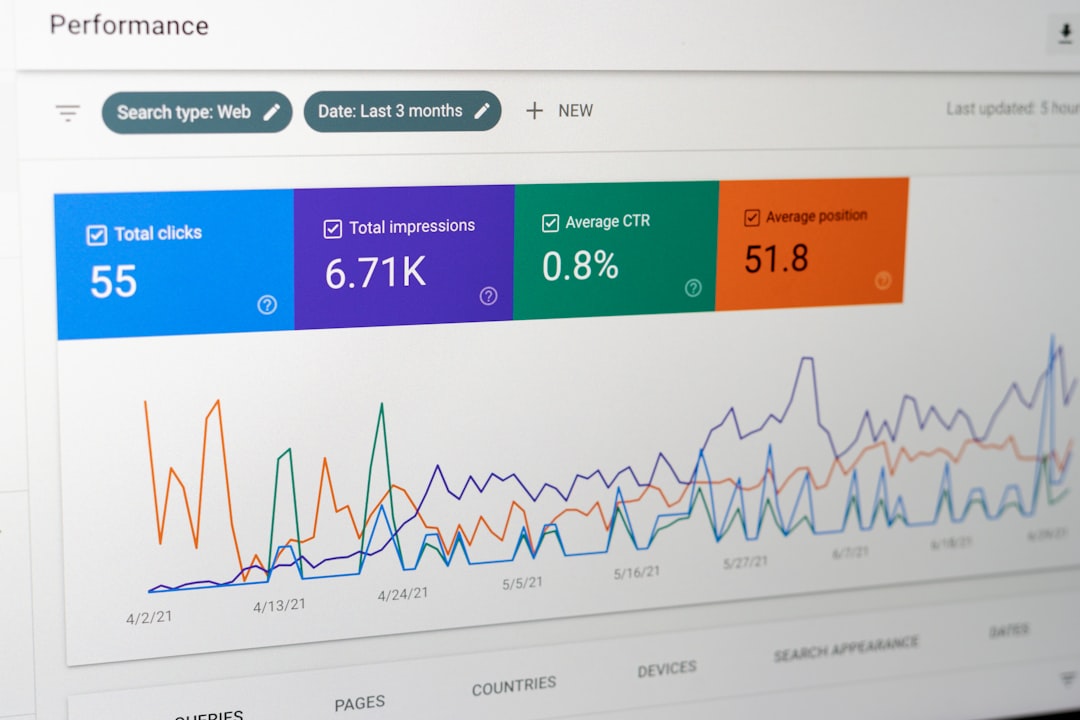

Impact on Publishers and Website Owners

Publishers argue that generative AI tools can reduce website traffic by providing direct answers to users’ queries. If fewer users visit original websites, advertising revenue and subscription growth may suffer.

This shift has led to concerns such as:

- Declining search engine referrals.

- Loss of ad revenue.

- Increased competition from AI-generated summaries.

In response, some websites are implementing technical measures like robots.txt restrictions to block AI crawlers. Others are pursuing collective bargaining strategies or supporting legislative reform.
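
As a sketch of how robots.txt restrictions work, the example below defines a robots.txt that disallows two AI-related crawlers site-wide and then uses Python's standard-library `urllib.robotparser` to check what a well-behaved crawler would conclude for each user agent. `GPTBot` (OpenAI) and `CCBot` (Common Crawl) are user-agent tokens their operators have published, but crawler names change, so treat the list as an assumption to verify; `SomeSearchBot` and the example.com URL are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Example robots.txt: blocks two AI-related crawlers, allows everyone else.
# The crawler tokens are assumptions; check each operator's current documentation.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())  # parse in-memory lines instead of fetching

url = "https://example.com/articles/some-story"  # hypothetical page
for agent in ("GPTBot", "CCBot", "SomeSearchBot"):
    # can_fetch() reports whether the rules permit this agent to crawl the URL
    print(agent, parser.can_fetch(agent, url))
```

robots.txt is a voluntary convention (standardized as RFC 9309): it states what the site owner wants, but nothing in the file itself stops a crawler that chooses to ignore it.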

Global Regulatory Approaches

Jurisdictions are approaching AI and copyright in different ways.

- United States: Relies heavily on existing copyright law and court interpretation.

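- European Union: The 2019 Directive on Copyright in the Digital Single Market allows text and data mining of lawfully accessible content unless rights holders have expressly reserved their rights, for example by machine-readable means for online content. The EU AI Act adds transparency duties, requiring providers of general-purpose AI models to respect those opt-outs and to publish a summary of the content used for training.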

- United Kingdom: Has explored broader text and data mining exceptions but faced pushback from creative industries.

This patchwork of regulations creates uncertainty for global tech companies, which must navigate compliance across multiple jurisdictions.

Industry Perspectives

The debate often falls into two camps.

Technology Companies’ View:

- AI training is comparable to human learning from publicly available information.

- Restricting training data could stifle innovation.

- Broad access to information fuels economic growth.

Content Creators’ View:

- AI training uses copyrighted material at unprecedented scale.

- Lack of consent undermines intellectual property rights.

- AI-generated content may dilute originality and market value.

Some stakeholders advocate for a middle ground involving structured licensing systems or collective rights organizations to ensure compensation without halting technological progress.

Potential Solutions and Compromises

Experts have proposed several solutions to address the tension between AI development and content ownership:

- Opt-Out Databases: Central registries where creators can refuse inclusion in training datasets.

- Revenue-Sharing Models: Compensation structures similar to music streaming royalties.

- Transparency Requirements: Disclosing general categories of training data sources.

- Stronger Technical Controls: Improved crawler identification systems.

Each solution carries trade-offs. For example, opt-in systems, a stricter alternative to opt-out registries, may limit dataset diversity, while revenue-sharing models require reliable tracking mechanisms that do not yet exist at scale.
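
On the technical-controls side, the simplest form of crawler identification is server-side matching on the User-Agent header, which lets a site refuse a request rather than merely ask a crawler to stay away. The Python sketch below assumes a hypothetical blocklist and a hypothetical helper, `status_for_request`, that returns an HTTP status code; it also shows why "improved" identification is needed, since the header is self-reported and a crawler that omits or disguises it passes straight through.

```python
from typing import Optional

# Hypothetical blocklist of lowercase crawler tokens. GPTBot (OpenAI) and
# CCBot (Common Crawl) are published names; verify current values before use.
BLOCKED_TOKENS = ("gptbot", "ccbot")


def status_for_request(user_agent: Optional[str]) -> int:
    """Return 403 if the User-Agent names a blocked crawler, else 200.

    The User-Agent header is self-reported, so a crawler that omits or
    disguises it will not be caught by this check.
    """
    ua = (user_agent or "").lower()
    if any(token in ua for token in BLOCKED_TOKENS):
        return 403  # Forbidden: self-identified as a blocked crawler
    return 200  # Anything else, including a missing header, is served


# Illustrative User-Agent strings, not the exact ones any operator sends.
print(status_for_request("Mozilla/5.0 (compatible; GPTBot/1.0)"))
print(status_for_request("Mozilla/5.0 (Windows NT 10.0; Win64; x64)"))
print(status_for_request(None))
```

Stronger schemes would verify a crawler's identity independently of what it claims, which is part of why crawler identification remains an open problem.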

The Future of Copyright in the Age of AI

The outcome of current legal battles could reshape copyright doctrine for decades. Courts may clarify whether AI training is a permissible transformative use or whether explicit licenses are required.

If lawmakers intervene, new legislation could:

- Define AI-specific copyright standards.

- Mandate data transparency.

- Create compensation frameworks for creators.

At its core, the issue reflects a broader societal challenge: balancing technological innovation with protection of intellectual property. How governments and courts resolve this tension may determine whether AI evolves as a collaborative tool or a disruptive force in the content economy.

FAQ

Is training AI on publicly available websites illegal?

Not necessarily. Many AI developers argue that scraping publicly available data qualifies as fair use, but courts are still evaluating this issue. Legal interpretations may vary by country and specific circumstances.

Does AI copy and store entire articles?

Generally, AI models do not store full copies of articles in accessible form. Instead, they learn statistical patterns. However, occasional outputs that closely reproduce source material have fueled controversy.

Can website owners block AI from using their content?

Website owners can use technical tools such as robots.txt files to restrict certain AI crawlers, though compliance with robots.txt is voluntary, so this protection is neither universal nor foolproof.

Are creators compensated when their work is used to train AI?

In most cases, no automatic compensation occurs. However, some AI companies have entered licensing agreements with publishers and media organizations.

Will new laws be created specifically for AI?

It is possible. Policymakers around the world are considering AI-specific regulations that address data usage, transparency, and copyright protections.
